Recent Changes

Here is a list of recent updates to the site. You can also get this information as an RSS feed, and I announce new articles on Fediverse (Mastodon), Bluesky, LinkedIn, and X (Twitter).

I use this page to list both new articles and additions to existing

articles. Since I often publish articles in installments, many entries on this

page will be new installments to recently published articles. Such

announcements are indented and don't show up in the recent changes sections of

my home page.

Tue 02 Jun 2026 11:21 CEST

Martin Fowler

Greg Wilson has noticed that lots of folks are using dodgy metrics to figure out if AI tools are worth their costs.

Would you measure lines of code generated, or tickets closed? Or would you send out a survey asking whether developers feel more productive? Each of those approaches is flawed in a different way;

He lists lots of common metrics, and why they are flawed. Sadly he doesn’t give any suggestions on what would be better. In my view, since we cannot measure productivity, any metrics are weak evidence at the best of times.

I do somewhat use one of his flawed measures: “Asking Developers If They Feel More Productive”. While I acknowledge the problems he gives with this measure, I find that in an environment where decent measures are hard to find, even such a dim light is the best we have. In this situation these kinds of qualitative metrics may not be conclusive, but they are useful.

❄ ❄ ❄ ❄ ❄

Benedict Evans observes that extensive automation didn’t mean the demise of professions in the past.

we spent a century automating accounting: we built calculating machines, punch cards, mainframes, data processing, databases, PCs, spreadsheets, ERPs, cloud… in fact, we built half of the tech industry around automating this. Yet the number of accountants kept going up.

He goes into the myriad of problems that exist when we’re trying to forecast the impact of a technology on jobs. There’s the much-talked-about Jevons paradox - once something becomes cheaper, people do it more, which can increase demand. Often this leads to the nature of jobs changing, even if it’s called the same thing.

Accountants today aren’t doing exactly the same work that they did in 1970 or 1980 ‘but more’ - they’re still called ‘accountants’ but the job is different. New technology often starts out being used for ‘the old thing but more’, but it rarely ends up like that.

Technologies often affect whole businesses - consider the impact of the internet on news publishing. Did anyone observing the rise of smart phones in the early 2000s realize that a consequence would be a change in the economics of taxis due to the rise of ride-sharing apps? The conclusion is that it is, at the very least, almost impossible to forecast the impact of AI on our work.

❄ ❄ ❄ ❄ ❄

Stephen O’Grady looks at how closed and open models have performed on benchmarks over time.

Closed models are setting the pace of innovation, and constantly breaking new ground from a capabilities standpoint. Open models are chasing them, and the cycle times seem to be getting shorter. There are no clear capability moats, and what is frontier today is table stakes tomorrow.

It took 13-18 months for open models to catch up to GPT-4 on these benchmarks, but only 2-7 months to catch up to GPT-4o.

There are a bunch of caveats to this analysis, which he lists, but it’s a worthwhile survey of how various kinds of models perform against the various measures we are trying to assess them with.

❄ ❄ ❄ ❄ ❄

One of the starkest examples of sloppy AI use is hallucinated citations - a give-away of both usage of LLMs and carelessness in driving them. GPTZero is a company that makes tools to detect AI writing. I’ve no insight as to whether their tool is effective or not, but they do publish investigations of AI usage, and have published several articles highlighting hallucinated citations. One post focuses on Ernst & Young Canada’s report on cyber threats to loyalty systems and found that more than half its references were hallucinations. The post uses a lot of extremely annoying animations in how it presents its information (breaking Safari’s reader mode in the process). But the harm that these kinds of AI-generated reports can do goes further than just some misled humans:

Publishing a report online is essentially a form of data injection into the pool of knowledge that is the internet. When the report includes fake information (either vibed citations or false claims) it can “poison the well” by misleading future researchers, especially if the report is published by a well-known consulting firm and hosted on a high-traffic website.

❄ ❄ ❄ ❄ ❄

As LLMs get more capable in programming, we are rightly worried that people will use them to attack software systems. But these models can also be used for defense, allowing teams to find bugs before attackers do. Some folks from Mozilla posted an article on how they’ve used AI models to identify and fix an unprecedented number of latent security bugs in Firefox.

Just a few months ago, AI-generated security bug reports to open source projects were mostly known for being unwanted slop. Dealing with reports that look plausibly correct but are wrong imposes an asymmetric cost on project maintainers: it’s cheap and easy to prompt an LLM to find a “problem” in code, but slow and expensive to respond to it.

It is difficult to overstate how much this dynamic changed for us over a few short months. This was due to a combination of two main factors. First, the models got a lot more capable. Second, we dramatically improved our techniques for harnessing these models — steering them, scaling them, and stacking them to generate large amounts of signal and filter out the noise.

During 2025, there were 17-31 security bugs fixed each month. In April 2026, they fixed 423.

❄ ❄ ❄ ❄ ❄

Pavel Voronin riffs on Unmesh Joshi’s post on What is Code. He observes that cruft in a codebase (technical debt) has always added friction to software development. But the consequences of this cruft are compounded when LLMs are using existing code as context for future work.

In a degraded codebase, the model does not see “technical debt” as debt. It sees examples. It sees precedent. It sees a style to continue.

LLMs multiply what’s currently happening. I hear reports that good code might take the place of much of what’s put in markdown, because LLMs will imitate what’s already in the code base. But bad code multiplies too. Inevitably he introduces another variation of rampant debt metaphors:

Cognitive debt accumulates when a team uses abstractions it no longer understands. Generative debt accumulates when a codebase contains confused concepts that models are likely to continue.

Cognitive debt is about what the team no longer understands. Generative debt is about what the model is now likely to reproduce.

❄ ❄ ❄ ❄ ❄

Jason Koebler, from the very worthwhile 404 Media, has written a plaintive essay on how AI-generated slop is driving us crazy. Not just because it’s filling the web with this slop, but also because of how it’s making us humans react to slop and the threat of slop. We review our own writing and notice: it’s not just reading AI slop that hurts us, it’s the risk that we write something that looks like AI slop. If I use phrasing that AI copied from me, does it seem like I’m copying AI? This has led to the appearance of “humanizers” - AI tools that make our writing look less like AI.

Humanizers add typos, randomly replaces words, removes “AI tells,” and sometimes inserts random characters.

It’s another step on the way to the Zombie internet:

I called it the Zombie Internet because the truth is that large parts of the internet are not just bots talking to bots or bots talking to people. It’s people talking to bots, people talking to people, people creating “AI agents” and then instructing them to interact with people. […] It’s my email inbox, in which I used to occasionally get poorly-formatted, poorly written, extremely long emails from delusional people who were positive the CIA had imprisoned them in a virtual torture chamber using undisclosed secret technology but where I now get well-formatted, passably written, extremely long emails from delusional people who are positive they have proven AI sentience and have the AI transcripts to prove it.

❄ ❄ ❄ ❄ ❄

Addy Osmani points out that spawning lots of agents is like launching a bunch of parallel processes that all rely on a single orchestrating thread - yourself.

Python has the Global Interpreter Lock (GIL). You can spawn as many threads as you want but only one executes python bytecode at a time because they must acquire the lock. You are the GIL of your AI agents. They all can run at once. But when any of their work needs genuine understanding of the architecture or resolving merge conflicts, that work has to acquire the lock. There is one lock. You hold it.

This means you must design the workflow with the agents with that GIL in mind. You shouldn’t launch more agents than you can properly review. It’s handy to separate background tasks that can be offloaded to an agent from complex tasks that require applied attention. Don’t use that precious brain for things that the machine can verify itself. [And I’d add - do get the machine to build tools that ease human verification. For example, it’s better to surface test case data in tables rather than buried in assert statements.]
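As a minimal sketch of that bracketed aside, here is one way test case data might be surfaced as a table that a human can scan, rather than spread across separate assert statements. The `shipping_cost` function and its pricing rules are hypothetical, invented purely for illustration.

```python
# Hypothetical function under test; the pricing rules are invented for illustration.
def shipping_cost(weight_kg: float, express: bool) -> float:
    base = 5.0 if weight_kg <= 1 else 5.0 + 2.0 * (weight_kg - 1)
    return base * 2 if express else base


# Test data as one table: each row is (weight_kg, express, expected_cost).
CASES = [
    (0.5, False, 5.0),
    (1.0, False, 5.0),
    (3.0, False, 9.0),
    (3.0, True, 18.0),
]


def run_cases(cases):
    """Return a list of (row, actual) for every row whose result is wrong."""
    failures = []
    for weight_kg, express, expected in cases:
        actual = shipping_cost(weight_kg, express)
        if actual != expected:
            failures.append(((weight_kg, express, expected), actual))
    return failures


def format_table(cases):
    """Render the cases as plain text so a reviewer can read them at a glance."""
    lines = [f"{'weight_kg':>9} {'express':>7} {'expected':>8}"]
    for weight_kg, express, expected in cases:
        lines.append(f"{weight_kg:>9} {str(express):>7} {expected:>8}")
    return "\n".join(lines)
```

Printing `format_table(CASES)` gives the reviewer the whole set of cases in one place, and adding a case means adding a row rather than writing another assertion.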

Spawning agents is not the skill. Anyone can run 20.

The real skill is designing the system around the one serial resource that cannot be cloned or parallelized. That resource is your attention.
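The analogy can be sketched in a few lines of Python. This is only an illustration: the agents are simulated by threads, and the names `agent`, `attention`, and `review_log` are hypothetical. All the workers run concurrently, but each must acquire the single lock before its work can be reviewed, so reviews happen strictly one at a time.

```python
import threading

attention = threading.Lock()   # the one serial resource: the human reviewer
review_log = []                # order in which work was actually reviewed
active_reviews = 0             # how many reviews are in progress right now
max_concurrent_reviews = 0     # the highest value active_reviews ever reached


def agent(name: str) -> None:
    global active_reviews, max_concurrent_reviews

    # "Generation" needs no lock, so every agent can do it at once.
    draft = f"change from {name}"

    # Review needs the lock, so only one agent gets through at a time.
    with attention:
        active_reviews += 1
        max_concurrent_reviews = max(max_concurrent_reviews, active_reviews)
        review_log.append(draft)
        active_reviews -= 1


def run_agents(count: int) -> None:
    threads = [
        threading.Thread(target=agent, args=(f"agent-{i}",)) for i in range(count)
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()


run_agents(20)
```

However many agents are started, `max_concurrent_reviews` never rises above one, which is the point of the quote: adding agents adds drafts, not review capacity.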

❄ ❄ ❄ ❄ ❄

Jamie Hurst is a Principal Engineer at booking.com, where he works in developer experience with a focus on AI tooling. He’s written realistically about the gains and losses of using LLMs in this work.

The cost of building has collapsed, but the cost of aligning organisationally has not. If anything, it’s gone up. When three different teams can each produce a working solution to the same problem in the time it used to take to write a proposal, the bottleneck moves from engineering to coordination.

He thinks he’s able to do more as a senior engineer, but is concerned about how sustainable it is, both for him personally and for the organization he works for. He’s able to shape directions for multiple workstreams at once, in a way that he couldn’t three years ago. But one loss is that he doesn’t have enough time for mentoring, which will exact a toll on his employer in the longer term. He also finds he doesn’t have enough time to think.

The productivity gains from AI got captured by output volume rather than output quality. The org’s expectations rose to absorb the speed-up, and the slack that used to exist between tasks, the unstructured time where strategic thinking actually happens, got eaten first because it’s invisible on a dashboard. I’m at a point in my career where thinking is supposed to be most of the job, and most of it now happens on holiday because the working week doesn’t accommodate it.

Wed 27 May 2026 15:40

Martin Fowler

At the GOTO Conference in Copenhagen in 2025, Kent Beck and I spent some time on stage talking and answering questions from the audience - a format I refer to as “two old geezers on a park bench”. We talk about our experiences with LLM-augmented programming (at that point - October 2025), we show our frustration that things we’ve been saying for thirty years still need to be said, we say how anything like a manifesto reunion needs to be led by a younger generation, and opine on what junior developers should be focusing on in their career.

❄ ❄ ❄ ❄ ❄

Ian Johnson has written a series of posts about restructuring a gnarly codebase:

The story follows a real Laravel + React codebase over ~3 months and ~258 commits from a legacy monolith with no tests to a well-structured application with automated quality gates, a React SPA migration in progress, and an AI agent that reliably ships production code with minimal supervision.

The series covers the steps in decent detail, and his approach follows the kinds of steps I’d use. First get everything under the control of decent characterization tests, then add static analysis, and introduce the right patterns to make things flow easily.

Alongside all of this is his use of AI, which changed during the exercise:

For the first two months of this project, I used Claude Code with auto-approve turned off. Every file edit, every terminal command, every change… I reviewed it before it executed. […] The results were good. The code was clean. But I was doing most of the thinking and half the typing. The agent was a fancy autocomplete with better suggestions. I wasn’t getting the leverage I’d hoped for.

I read an article about “on-the-loop” versus “in-the-loop” human-AI collaboration. The framing clicked immediately […] I was micromanaging because I didn’t trust the agent to do the right thing. And I didn’t trust the agent because there was nothing forcing it to do the right thing.

His early steps put in tests, static analysis, and the right architectural patterns. With those in place, he could let the agent do more work.

My role shifted from writer to curator. I don’t write most of the code anymore. I Define the patterns […] Review the test specs […] Review the output […] Update the harness […] Make strategic decisions […]

He finishes the series with conclusions about how he’d generalize his experience to other circumstances.

❄ ❄ ❄ ❄ ❄

Back in the land of my birth, there were some notable groans when the National Health Service decided to close nearly all of their Open Source repositories, supposedly due to the security threat of LLMs. Closing repos like this isn’t an effective counter to LLM-augmented attackers. I suspect it’s no coincidence to see GDS (Government Digital Service), the highly-regarded IT enablers in the UK government, publish their position:

Moving code from public to private as a substitute for investment in secure-by-design delivery, ownership and remediation is a warning sign because it reduces sharing and scrutiny, can slow coordinated improvement across government and suppliers, and does not remove the underlying weaknesses in a running service.

Terence Eden memorably sums up his view on this:

Within the UK’s Civil Service you occasionally hear the expression “being invited to a meeting without biscuits”. It implies a rather frosty discussion without any of the polite niceties of a normal meeting.

❄ ❄ ❄ ❄ ❄

I’ve seen a few cases where those developers who are most involved in working with LLMs find they are running into a problem with cognitive endurance. Adam Tornhill has joined this group:

One of the big wins with agents is that they let us stay with the higher-level problem for longer. We get less sidetracked by details, dependency cleanup, and similar secondary tasks that used to break concentration.

But there is a cost we are still underestimating. Agentic coding is mentally expensive.

I can usually sustain the pace for a couple of hours. Then I need a break. The pace is simply too intense. And based on conversations with other engineers, I do not think I am alone in that.

He explains that working with The Genie means we are making more decisions in less time, and this increase in decision density is hard on the brain.

He responds by keeping agent tasks small, automating everything he can, and accepting that he won’t know every line of code as long as he has good verification mechanisms in place.

Notably, he has not gone in the direction of doing his work with swarms of agents that he coordinates. Instead he has one long-running task that he babysits and one focus task.

That last point is important given the running-twenty-agents-in-parallel hype. I cannot even think about twenty meaningful things to build, and even less so about the resulting cognitive tax of the likely interruptions. It’s exactly the wrong thing to even consider. At least for humans. (And yes, I understand sub-agents and machine parallelisation. That is not what I’m objecting to. It is the parallelisation of human attention that does not scale).

I liked that he included some thoughts about what folks can do in time outside this intense programming time. Not just “have a coffee” (although he includes that) but also about learning about the domain that the software supports.

❄ ❄ ❄ ❄ ❄

A couple of pithy quotes from social media

Lorin Hochstein

“Metaphor debt” is when all of your metaphors involve the concept of “debt” because you can’t think of any other metaphors anymore.

❄ ❄

Daniel Terhorst-North

If a vegan crossfit fan is using Claude to write Rust, which thing do they tell you first?

❄ ❄ ❄ ❄ ❄

Karl Bode reacts to speakers getting booed when mentioning AI during commencement addresses. He points out that younger folks are increasingly unhappy with the tech oligarchy and their fruits.

The thing is the kids aren’t stupid. They see the field clearly. They see the difference between what’s being sold to them by tech companies, the press, and commencement speakers, and what they have repeatedly seen with their own eyes.

They’ve watched tech oligarchs spend the last decade mired in scandal after scandal, hype cycle after hype cycle, steadily enshittifying everything they touch along the way.

[…]

The percentage of Gen Z that think AI’s benefits don’t counterbalance the risks now sits around fifty percent, up 11 percentage points in just the last year. Eight out of every ten believe that using AI makes the process of actual learning more difficult.

He sees young people saddled with the perception of entering a worsening world - which leads them to rage against this latest fruit of the tech oligarchy. A rage that is hard for folks like me - with a comfortable retirement off-ramp - to properly appreciate. A rage that could have marked political and social consequences.

❄ ❄ ❄ ❄ ❄

Relevant to these concerns are a couple of items in last week’s Economist newspaper. The newspaper argues that historically major technological advances haven’t led to significant unemployment or drops in wages (paywalled article). The closest was the original industrial revolution in 19th Century Britain. There was a stagnation in wages during this period, but there was also a massive increase in population, from 4½ million to 12 million.

It also points out that we’ll probably only understand the full consequences of all this when a recession hits, as this is when most unproductive jobs tend to be flushed out of the system.

A second article (also paywalled) indicates that AI is having some effect on graduate hiring. They did an analysis of surveys of recent graduates, looking to see if employment varied depending on a job’s exposure to AI. The least exposed quintile of subjects saw its employment rate fall by 1.5% over the last couple of years, while the most exposed quintile’s drop was 6.6%.

❄ ❄ ❄ ❄ ❄

Lawfare isn’t impressed with the latest efforts by the US Government to regulate AI.

On [last] Wednesday, the White House invited leaders of OpenAI, Google, Anthropic, Meta, and Microsoft to the Oval Office for a signing ceremony the following afternoon. President Trump was to sign an executive order on AI and cybersecurity—the administration’s most formal effort yet to establish a voluntary process for reviewing frontier models before their release. But roughly three hours before the ceremony, when some company executives were already in the air to Washington, the White House called it off.

They see the proposed regulations as mild, and as including some valuable measures to harden defenses against cyber threats.

But it’s worth underscoring the implications of postponing (if not outright canceling) this order, which, by its own terms, was about as modest a frontier-AI intervention as the federal government could put on paper: voluntary, focused on the government’s own defenses, and explicitly barred from becoming a licensing regime. The objection isn’t so much about government coercion as about the government having any settled role at all. Voluntary, in other words, isn’t the floor of frontier AI policy in this administration; it’s the ceiling.

This is a questionable position given that the concerns animating this draft order will likely grow in the near future. It is also self-defeating for those who applauded the order’s delay or demise. Far from resolving the risk of government meddling in AI, killing the order just leaves in place what Ball has described as the “opaque and essentially lawless” alternative: government access happening through back channels, on terms set case by case, with no stable rules at all.

One of the problems here is a distinct lack of governmental expertise, either in AI or in software in general. Too much is being decided at the whims of the tech oligarchy; there isn’t any attempt to engage in the broader issues at hand. That’s not entirely a bad thing, since trying to regulate something that’s still evolving so fast is usually a fool’s errand - but the problem here is that the impact of AI is so big that there’s real danger in being too far behind.

❄ ❄

Which leads me to a rare thing, an endorsement of a candidate for political office. If you are voting in congressional district MA-06 (North Shore of Massachusetts), I’d seriously look at Beth Andres-Beck, who is running for Congress in that district. Beth has a long background in software development (including developing the notion of Forest and Desert), so would introduce expertise that Congress desperately needs. I’ve known Beth for decades, and have a high opinion of their intelligence, judgment, and ability to work with others. Congress doesn’t deserve Beth, but it does need her.

Wed 27 May 2026 11:01

Martin Fowler

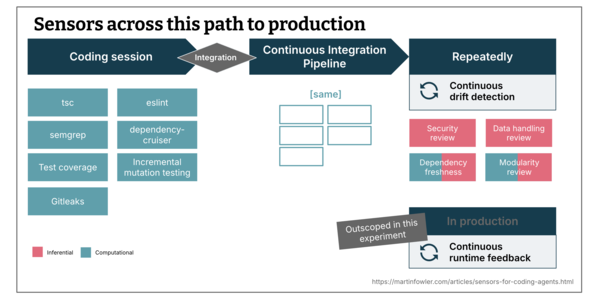

Birgitta Böckeler finishes her post on sensors for

coding agents by examining the role of a test suite as a regression

sensor, focusing on the role mutation testing can play.

more…

Wed 27 May 2026 10:03

Martin Fowler

Vibe coding has significantly accelerated software prototyping but AI

agents frequently recommend insecure configurations, creating security

problems. Gautam Koul, Lucian Moss, Neil Drew-Lopez, and Daberechi

Ruth Edeokoh share their experience while building applications

for Thoughtworks's global marketing. They learned that to combat this we

need to write a security context file to guide the AI, be cautious with AI

permission requests, create a daily security intelligence feed, and

provide builders with a secure-by-default harness and templates.

more…

Thu 21 May 2026 07:49

Martin Fowler

Vibe coding is building a software application by prompting an LLM, telling it

what to build, trying it out, prompting for changes - but without looking at

any of the code that the LLM generates. This technique can be used by people

without any knowledge of programming. However the resulting software often

shows problems with maintainability, correctness, and security - so is best

used for disposable software written for a limited audience.

The term was coined in February 2025 by Andrej Karpathy, an experienced

programmer, in a post on X:

There's a new kind of coding I call “vibe coding”, where you fully give

in to the vibes, embrace exponentials, and forget that the code even exists.

It's possible because the LLMs (e.g. Cursor Composer w Sonnet) are getting

too good. Also I just talk to Composer with SuperWhisper so I barely even

touch the keyboard. I ask for the dumbest things like “decrease the padding

on the sidebar by half” because I'm too lazy to find it. I “Accept All”

always, I don't read the diffs anymore. When I get error messages I just

copy paste them in with no comment, usually that fixes it. The code grows

beyond my usual comprehension, I'd have to really read through it for a

while. Sometimes the LLMs can't fix a bug so I just work around it or ask

for random changes until it goes away. It's not too bad for throwaway

weekend projects, but still quite amusing. I'm building a project or webapp,

but it's not really coding - I just see stuff, say stuff, run stuff, and

copy paste stuff, and it mostly works.

-- Andrej Karpathy

The key point about vibe coding is “forget that the code even exists”.

This is what gives it much of its usefulness, but also its limitations.

Since the November Inflection many programmers are

getting LLMs to write all their code, commenting that they may never write a

line of code directly again. However they do care about this code, reviewing

it, paying attention to its internal structure. In that case, they aren't

forgetting the code exists, so it's really a different thing that I call

Agentic Programming. Sadly the term “vibe coding” really

caught on, so many people use it to mean agentic programming. However I feel

that despite this rapid Semantic Diffusion, it's worth

trying to keep the concepts of vibe coding and agentic programming separate,

as they are both different to use and different in their consequences.

Because a vibe coder doesn't look at the code, they don't need programming

skills, so it's perfect for someone with no programming knowledge to build

applications for their own use. Experienced programmers may also find it handy

for rapid development of disposable software or prototypes.

Vibe coding

is still new, so we are exploring its limitations, and those limitations

change as the sophistication of models and their harnesses change. These

limitations do introduce considerable risks, particularly if the vibed

software is used widely or has access to sensitive information.

Perhaps the most serious risk is that of security. LLMs are inherently

vulnerable as they provide a large attack surface for predators. Vibe coded

applications can often expose sensitive information or worse, credentials to

attack deeper into an organization's systems. Even non-programmers need to be

aware of the Lethal

Trifecta.

With little attention to the code, vibed software can rapidly produce

many lines of code of a very low quality. Such code makes it difficult, even for an LLM,

to modify and enhance the software in the future. While it's possible that

growing LLM capabilities will allow it to work with even the largest bowls of

spaghetti software, thus far it seems clear that well-structured software

makes life easier for LLMs too.

LLMs are famous for their habit of hallucinating incorrect facts and presenting

these with great confidence. This habit also leads them to create software

that behaves incorrectly - and those errors may not be manifest to the user.

Furthermore the non-determinism of LLMs means that it's likely that asking an

LLM to enhance some software could easily lead it to introduce errors, even in

parts of the code that shouldn't change due to the new request. We should thus

treat LLM-generated software with skepticism. It can still be useful, but we

need to be aware of the risks.

On the whole vibe coding is best used for disposable software

that's only used by its author or a close group of collaborators who

understand and accept the risks involved. Code that is more complex, more

widely-used, and with more consequences to its risks should not be forgotten about.

Wed 20 May 2026 10:27

Martin Fowler

Birgitta Böckeler adds discussion of three more

sensors for static code analysis, focusing on checking and enforcing

better modularity. Computational sensors for dependency checks were good

at enforcing rules, but the rules were limited. Building a computational

sensor for coupling data proved lackluster. Prompting an inferential

sensor to review modularity was more effective.

more…

Tue 19 May 2026 12:38

Martin Fowler

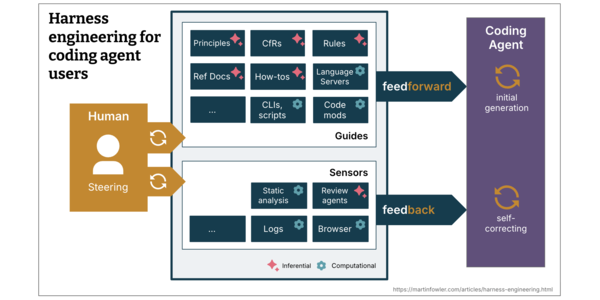

In her recent article about harness engineering for coding agent users,

Birgitta Böckeler laid out a mental model for expanding a

coding agent harness: a system of guides and sensors that increase the

probability of good agent outputs and enable self-correction before issues

reach human eyes. Birgitta has now started publishing an article where she

walks through her experiences using sensors to keep a codebase

maintainable. This part looks at static analysis with basic code linting.

more…

Thu 14 May 2026 17:52

Martin Fowler

Last week I spent a day at The Orchard Retreat, hosted by Mechanical Orchard, which brought together several people working in software development to talk about the profession’s future with the rise of agentic programming. The event was held under the Chatham House Rule, so I can’t attribute the comments and stories I heard. (If anyone recognizes themselves, and would like attribution, let me know.) Here are a few tidbits that caught my notebook.

❄ ❄

One group developed a behavioral clone of the GNU Cobol compiler in Rust. The result is 70K lines of Rust and was built in 3 days. This is yet another sign of the ability of LLMs to do a good job of porting existing code to a new platform. Good regression tests are extremely valuable here (and I don’t know how good GNU Cobol’s are). There’s also the possibility of building a test suite if you have access to an existing implementation.

❄ ❄

Large spec documents can be complex for a human to review. One attendee shared the idea of getting the LLM to interview a human expert, asking the human questions to verify the correctness of the specification, a form of Interrogatory LLM.

❄ ❄

Not specifically about AI - but I liked how one attendee commented that the first thing they do when consulting with an organization is to read the guidelines for their change-control board. This is the scar tissue of what’s gone wrong in the past. I’ve often said that to understand why a thing is the way it is, you need to understand the history of how it got there. This seems like an excellent way to tap into important parts of that history.

❄ ❄

My colleagues who work with modernizing legacy systems have long been rather sniffy about “Lift and Shift” - porting a legacy system to a new platform while retaining Feature Parity.

We see this pattern as a huge missed opportunity. Often the old systems have bloated over time, with many features unused by users (50% according to a 2014 Standish Group report) and business processes that have evolved over time. Replacing these features is a waste. Instead, try to muster the energy to take a step back and understand what users currently need, and prioritize these needs against business outcomes and metrics.

But this point of view was developed before LLMs’ ability to port code appeared. One attendee who does a lot of work in this field said they believed that lifting and shifting to a new platform should now always be the first step in a legacy migration. The cost is no longer as formidable as it used to be, and a better environment makes further evolution much cheaper. Just don’t stop there.

❄ ❄

Several attendees were from the financial industry, and thus were immersed in the problems of complex legacy environments coupled with regulatory controls and significant risk should software do something wrong with money. One of their issues is the complexity we run into when a financial product is offered in multiple jurisdictions, each with their own regulations to satisfy. There’s a lot of software complexity in deciding which jurisdiction applies, and picking the right set of rules at the right point of the workflow.

The question here is whether the rapidity of agentic programming means that we can build individual, simpler systems for each jurisdiction. We would then use LLMs to ensure consistency between them, so that as the product rules change, each system reflects that into its own environment.

A large part of software design is about identifying what is the same and what differs between various business contexts. Where things are the same, and need to be the same, we are rightly wary of duplicating code, since this increases the cost of updates and the dangers of inconsistency. The interesting question is what role LLMs can play to give us new tools to tackle this.

❄ ❄

As is usually the case in gatherings like this, folks were concerned about junior developers. When we work with The Genie, our value comes from good judgment - how do we teach that? This group did have one common tool - Pair Programming. One of the key benefits of pairing has always been skills transfer, and here an experienced agentic programmer can pass on their judgment for software design and how to use the genie to get there. And the junior will often have a trick or two to share too - that fresh pair of eyes is particularly valuable in the shift to our agentic future.

❄ ❄

Historically, we use computer systems to bring order to chaotic human processes. Is AI reversing that?

❄ ❄

So much software is involved in data transformation. Those records over there need to be consumed by these APIs over here, but there are differences in how the data is structured, often due to being in different Bounded Contexts, so we have to do some conversion. Agents are particularly adept at writing this kind of transformation code, which is often more tedious than we’d like.
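As a minimal sketch of the kind of conversion meant here, assume a hypothetical billing context that holds a customer as a flat record, and a hypothetical shipping API that expects a nested structure. Every type and field name below is invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BillingCustomer:
    """Hypothetical record shape in the source (billing) context."""
    customer_id: str
    full_name: str
    address_line: str
    postcode: str
    country_code: str


def to_shipping_recipient(customer: BillingCustomer) -> dict:
    """Convert a billing record into the assumed shape of a shipping API payload."""
    # Split on the first space only; anything after it is treated as the family name.
    given, _, family = customer.full_name.partition(" ")
    return {
        "recipientRef": customer.customer_id,
        "name": {"given": given, "family": family},
        "destination": {
            "street": customer.address_line,
            "postalCode": customer.postcode,
            "country": customer.country_code.upper(),
        },
    }
```

None of this is difficult, but every field needs a decision about naming, nesting, and normalization, which is where the tedium comes from.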

❄ ❄

Chaos Engineering has become a valuable technique to improve resiliency, made famous by Netflix’s Chaos Monkey that randomly breaks live services to see how well the ecosystem reacts and recovers. What would a Chaos Monkey for AI look like? Would it deliberately introduce hallucinations into a pipeline to see if sensors were able to catch them?
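One speculative way to picture such an experiment: corrupt a known fraction of a stage's outputs on purpose, then count how many of those the sensor flags. This is only a sketch under my own assumptions. `chaos_trial`, `corrupt`, and `sensor` are hypothetical names, and the stage here stands in for whatever step of a pipeline calls a model.

```python
import random
from typing import Callable, Iterable


def chaos_trial(inputs: Iterable[str],
                stage: Callable[[str], str],
                corrupt: Callable[[str], str],
                sensor: Callable[[str, str], bool],
                rate: float,
                seed: int = 0) -> tuple[int, int]:
    """Run inputs through a stage, deliberately corrupting some outputs.

    Returns (faults_injected, faults_caught). `sensor(input, output)` should
    return True when it flags the output as suspect.
    """
    rng = random.Random(seed)  # seeded so a trial can be replayed
    injected = 0
    caught = 0
    for item in inputs:
        output = stage(item)
        if rng.random() < rate:
            output = corrupt(output)  # the injected "hallucination"
            injected += 1
            if sensor(item, output):
                caught += 1
    return injected, caught
```

A sensor that catches few of the injected faults is telling us something before a real hallucination does.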

❄ ❄ ❄ ❄ ❄

Back at my desk

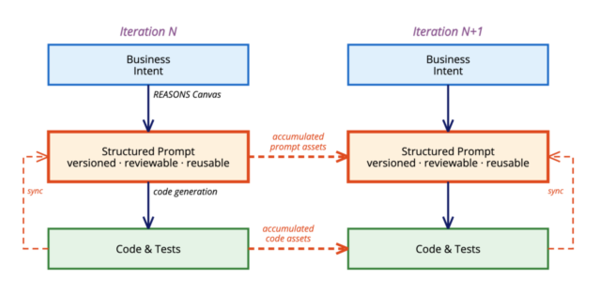

There’s been a bunch of questions about the article on Structured-Prompt-Driven Development (SPDD) that the authors answered in a Q&A section. One in particular caught my eye:

Have you considered having an agent do the prompt/spec review itself — not a human reviewing the Canvas, but an agent that reads the REASONS Canvas alongside the code diff and verifies alignment?

The reply talks about how there is an available command to do this, but there are downsides. In particular, one reason not to do this automatically is:

Letting humans learn. Review is also where humans learn from the AI’s choices — patterns, trade-offs, options they had not thought of. Cutting humans out speeds things up, but it blocks the long-term skill growth that SPDD is designed to protect. […] Once enough decision rules build up to give us real confidence, we may shift more of the review to the agent step by step — but the part where humans learn from the AI is something we plan to keep.

One of the ways we should judge the value of an AI tool is how much it helps us humans learn more about the world we inhabit and build.

❄ ❄ ❄ ❄ ❄

In some strange way I injured my elbow last week. No idea how, there was no event where I said “oh shit”. It just gradually started hurting and swelling. My life-long strategy to avoid sports injuries had defied me. I applied ice and ibuprofen, the swelling went down, but my range of motion got worse. I’m glad I learned to use a knife and fork in an English childhood, so I normally eat with my left hand.

I noticed that that loss of range of motion occurred after I got home, when I started spending all day at the computer again. I might not use my elbow directly, but my right hand does a lot of typing and mousing. My desk set up is pretty ergonomic, with a good keyboard, a wrist rest for the mouse, and arm rests on my chair. But even so, did my computer use make my elbow get worse once I got home? I can’t imagine not using the computer, for me writing has become an unstoppable habit. But maybe I should use this opportunity to explore voice input - after all most people can speak faster than they can type.

I tried this many years ago, when a colleague told me how good voice recognition was once it was trained to you. I tried it, and indeed the voice recognition, even in those pre-AI days, was very good. But it didn’t work for me. When I’m writing I rapidly type words into Emacs, but almost immediately I go back to edit them. Write two sentences, edit them, write another, re-edit the paragraph. The back-and-forth between seeing my words and thinking about them is tight - I can’t just dictate my words.

That made me reflect further. I only started using a computer for my writing in my 20s. At school I had to write longhand, and in university to type on a typewriter. But those media don’t support the constant rewriting that I do now. Would I even have become a writer had the text editor not been invented?

❄ ❄ ❄ ❄ ❄

James Pritchard thinks that many developers are over-using agents at run-time in their products, when LLMs are better used as functions.

The problem with agents isn’t that they don’t work. It’s that they work unpredictably. You trade a known execution path for “autonomy” that mostly means “I don’t know what it’s going to do.” When an agent-powered feature breaks in production, you’re debugging a conversation transcript, not a stack trace.

Most “agent” use cases are actually workflows, a known sequence of steps where one or two of those steps happen to involve an LLM. You don’t need autonomy for that. You need a function call.

He points out that functions compose predictably, so if you know the workflow, then composing in a program text is better than agents figuring out how to coordinate themselves. It’s faster, and needs fewer tokens. It’s usually easier to deal with failures, since the scope of the interaction is smaller.
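To make the contrast concrete, here is a minimal sketch of a fixed workflow in which exactly one step calls a model. It is my own illustration, not code from Pritchard's post. The ticket-routing scenario, the labels, and the queue names are hypothetical, and `llm_classify` stands in for whatever model call a real system would use.

```python
from typing import Callable

ALLOWED_LABELS = {"billing", "bug", "other"}  # hypothetical categories
QUEUES = {"billing": "finance", "bug": "engineering", "other": "triage"}


def handle_ticket(text: str, llm_classify: Callable[[str], str]) -> dict:
    """A known sequence of steps; only step 2 involves an LLM."""
    # Step 1: deterministic cleanup (collapse runs of whitespace).
    cleaned = " ".join(text.split())
    # Step 2: the LLM, used as a plain function from text to label.
    label = llm_classify(cleaned).strip().lower()
    # Step 3: narrow failure handling; an unexpected answer falls back to "other".
    if label not in ALLOWED_LABELS:
        label = "other"
    # Step 4: deterministic routing.
    return {"text": cleaned, "label": label, "queue": QUEUES[label]}
```

Because the model is passed in as an ordinary callable, a test can swap in a stub, and a failure points at one small step instead of a whole conversation.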

❄ ❄ ❄ ❄ ❄

Pritchard also thinks that people use skills far more than they should. He thinks people accumulate folders of markdown skill files but LLMs use them inconsistently, often missing them when they’re needed, or bloating context when they are not. Many things that should go in skills should be other parts of a harness, preferably computational. Skills should only be used with deliberate, infrequent workflows.

The skills obsession is a symptom of a deeper pattern: people reaching for configuration when they should be reaching for architecture.

“The LLM doesn’t write good tests.” Don’t write a testing skill. Are your existing tests inconsistent? Is the test setup complex? Fix those things and it’ll write good tests without being told how. Point it at a test file you’re proud of. Code is clearer than English.

[…]

The best setup is one where you barely need to configure the LLM at all. A clean codebase with clear patterns, a short project config for the non-obvious stuff, hooks for automation, and maybe one or two skills for specific workflows you run intentionally. That’s it.

❄ ❄ ❄ ❄ ❄

An oft-stated point about the rise of agentic programming is that we have to start dealing with non-determinism in our work. Of course that’s somewhat of a simplification, because some aspects of software development have long had to face non-determinism. A notable example of this is distributed systems, and a notable figure in helping us probe the truly uncomfortable waters of distributed systems is Kyle Kingsbury (Aphyr).

Last month he dropped a long article (the pdf is 32 pages) on how he sees our LLM-enabled future. The title “The Future of Everything is Lies, I Guess” betrays his lack of enthusiasm for this future.

Some readers are undoubtedly upset that I have not devoted more space to the wonders of machine learning—how amazing LLMs are at code generation, how incredible it is that Suno can turn hummed melodies into polished songs. But this is not an article about how fast or convenient it is to drive a car. We all know cars are fast. I am trying to ask what will happen to the shape of cities.

It’s worth the long read, even if it isn’t terribly cheerful. Kingsbury brings up many worries about AI’s growth from the perspective of someone who is clearly well-informed about their capabilities.

His view is that the best response to all this is that we should stop. He wants to avoid using AIs for his writing, software, or personal life. He thinks those working for the AI companies should quit. And yet he also knows that these tools are useful, and wants to use them.

I’m both a hoper and a doomer when it comes to our AI future. Fundamentally I see any powerful technology as a big bus: we are either on it, or get run over by it. I’m onboard the bus because I don’t think putting up some barriers would stop me being crushed by its wheels. Maybe if I’m on the bus I can join some people to influence the driver a bit. I’m also very reluctant to speculate on the future outcomes of anything, let alone something as powerful as this. Did the early industrialists in the late eighteenth century have any clue what the industrial revolution they unleashed would do? While it created many harms, it also created a massive rise in the living standards of millions of people, at least those whose countries were on the bus. AI may create benefits that I can’t really dream of, although I can glimpse it when it helps a friend stave off Parkinson’s disease.

Those hopes are there, but Kingsbury’s article shines a light on the darker elements of the here-and-now, asking serious questions of responsibility:

a part of my work as a moderator of a Mastodon instance is to respond to user reports, and occasionally those reports are for CSAM, and I am legally obligated to review and submit that content to the NCMEC. I do not want to see these images, and I really wish I could unsee them. On dark mornings, when I sit down at my computer and find a moderation report for AI-generated images of sexual assault, I sometimes wish that the engineers working at OpenAI etc. had to see these images too. Perhaps it would make them reflect on the technology they are ushering into the world, and how “alignment” is working out in practice.

Thu 14 May 2026 11:04

Martin Fowler

When we need an LLM to perform a complex task, we often need to feed it a lot of context. Coming up with a design for a new feature requires descriptions of how we want the feature to appear to the user, guidelines on how it should be implemented, information on external systems to consult, and so on. All this can be several pages of markdown. The obvious way to do this is for a human to write this context, but an alternative is to use an LLM to write this context after interviewing a human.

The way I can do this is to prompt the LLM to interrogate me. It should ask me all the questions it needs to create this appropriate context. I can feed much of the information it needs, and tell it other sources it needs to consult if it can't figure those out itself. Once it's done, it can then create the context report for another session (perhaps with another model) to carry out the next step.

I first saw a decent description of this approach in Harper Reed's blog. A striking element of his approach is insisting that the LLM ask only one question at a time. (When I tried it, I found it needed to be frequently reminded of this.)
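The shape of such an interview can be sketched as a small loop. This is an illustration under my own assumptions, not Harper Reed's prompt: the prompt wording, the `DONE` convention, and the `model` and `human` callables are all hypothetical stand-ins for a real chat session.

```python
from typing import Callable

# Hypothetical prompt wording, written for this sketch only.
INTERVIEW_PROMPT = (
    "Interview me to gather the context needed for the task below. "
    "Ask exactly one question at a time. When you have enough, reply with "
    "DONE on its own line followed by the context report.\n\n"
    "Task: {task}"
)


def run_interview(task: str,
                  model: Callable[[list[str]], str],
                  human: Callable[[str], str],
                  max_turns: int = 20) -> str:
    """Alternate model questions and human answers until the model reports."""
    transcript = [INTERVIEW_PROMPT.format(task=task)]
    for _ in range(max_turns):
        reply = model(transcript)
        if reply.startswith("DONE"):
            # Everything after the marker is the context report.
            return reply[len("DONE"):].strip()
        if reply.count("?") > 1:
            # Crude check for several questions at once: remind the model
            # instead of passing the batch on to the human.
            transcript.append("Reminder: ask only one question at a time.")
            continue
        transcript.append(reply)
        transcript.append(human(reply))
    raise RuntimeError("interview did not finish within max_turns")
```

The returned report is plain text, so it can be handed to a fresh session, or a different model, for the next step.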

Another way to use an interrogatory LLM is to give it a document, such as a software specification, that captures knowledge about a domain - and then ask the LLM to interview a human expert to determine if the document is accurate. This is an alternative to getting the human expert to read the document to review it. People often find reviewing hard, so a conversation with an LLM might be more fruitful, particularly if the document isn't well-written.

Naturally we can use both of these, using one interrogatory LLM to build a document, then using other interrogatory LLMs to review it with other experts.

The above is getting an LLM to create or assess context for a particular use of an LLM. But the technique is more broadly applicable. I've become a natural writer, someone who finds the process of writing an essential part of thinking. To really understand something, I need to write about it. But different people are different. Many folks find writing hard, often very hard. This can be a real problem when we need to get information out of someone's head into a form that other humans can consume. Maybe such people would find it easier to ask an LLM to interview them than to write a document themselves. Certainly the result will have that tang of AI-writing that folks like me shudder at - but that's better than not having the information itself, either due to rushed writing or no writing at all.

Tue 12 May 2026 09:30

Martin Fowler

Increasingly humans delegate writing code to agents. Will there even be source code in the future? To wrestle with this question, we have to understand what code is. Unmesh Joshi sees code as having two distinct but intertwined purposes: instructions to a machine and a conceptual model of the problem domain. He explores why it's vital to build a vocabulary to talk to the machine, how programming languages are thinking tools, and how this affects our future as we work with LLMs.

more…

Tue 05 May 2026 12:23

Martin Fowler

Over the last couple of months Rahul Garg published a series of posts here on how to reduce the friction in AI-assisted programming. To make it easier to put these ideas into practice he’s now built an open-source framework to operationalize these patterns.

AI coding assistants jump straight to code, silently make design decisions, forget constraints mid-conversation, and produce output nobody reviewed against real engineering standards. Lattice fixes this with composable skills in three tiers – atoms, molecules, refiners – that embed battle-tested engineering disciplines (Clean Architecture, DDD, design-first methodology, secure coding, and more), plus a living context layer (the .lattice/ folder) that accumulates your project’s standards, decisions, and review insights. The system gets smarter with use – after a few feature cycles, atoms aren’t applying generic rules, they’re applying your rules, informed by your history.

It can be installed as a Claude Code plugin or downloaded for use with any AI tool.

❄ ❄ ❄ ❄ ❄

This is also a good point to note that the article by my colleagues Wei Zhang and Jessie Jie Xia on Structured-Prompt-Driven Development (SPDD) has generated an enormous amount of traffic, and quite a few questions. They have thus added a Q&A section to the article that answers a dozen of them.

❄ ❄ ❄ ❄ ❄

Jessica Kerr (Jessitron) posted a merry tidbit of building a tool to work with conversation logs. She observes the double feedback loop involved.

There are (at least) two feedback loops running here. One is the development loop, with Claude doing what I ask and then me checking whether that is indeed what I want.[…] Then there’s a meta-level feedback loop, the “is this working?” check when I feel resistance. Frustration, tedium, annoyance–these feelings are a signal to me that maybe this work could be easier.

The double loop here is both changing the thing we are building but also changing the thing we are using to build the thing we are building.

As developers using software to build software, we have potential to mold our own work environment. With AI making software change superfast, changing our program to make debugging easier pays off immediately. Also, this is fun!

Indeed it is, and makes me think that agents are allowing us to (re)discover one of the Great Lost Joys of software development - that of molding my development environment to exactly fit the problem and my personal tastes. A while ago I wrote about this under the name Internal Reprogrammability. It was a central feature of the Smalltalk and Lisp communities but was mostly lost as we got complex and polished IDEs (although the unix command line gives a hint of its appeal).

❄ ❄ ❄ ❄ ❄

Ashley MacIsaac is a musician from Cape Breton, who plays folk-influenced music (I have a couple of his albums in my collection). Google generated an AI overview that asserted that he had been convicted of crimes, including sexual assault, and was on the national sex-offender registry. These claims were completely false, confusing him with another man with the same name. MacIsaac is suing Google for defamation:

“This was not a search engine just scanning through things and giving somebody else’s story […] It was published by them. And to me, that is defamation. The guardrails were not there to prevent Google AI from publishing that content.”

MacIsaac’s point is that Google must take responsibility for what a tool it controls publishes. MacIsaac suffered genuine harm here, not just to his reputation, but he also had a concert canceled and the claims affect his performing.

“I felt that tangible fear from something that was published by a media company,” he said in an interview with The Canadian Press. “I feared for my own safety going on stage because of what I was labelled as. And I don’t know how long this will follow me.”

Too often tech companies try to dodge the consequences of their actions. There are genuine issues about the difficulties of monitoring what’s published at scale, but that’s a responsibility that they should face up to.

❄ ❄ ❄ ❄ ❄

Stephen O’Grady (RedMonk) takes a serious look at how much the big tech companies are spending on AI build-outs. The sums involved are staggering, not just in absolute terms (over $100 Billion), but also compared to the revenues of the companies involved. Firms like Amazon, Alphabet, and Microsoft are spending over 50% of their revenues (not profits). Meta and Oracle hit or pass 75% of revenues.

That level of investment would have been unthinkable a decade ago. Today, the chart suggests it’s table stakes

There is a notable exception: Apple. Here they are clearly Thinking Different; eyeballing the chart, they seem closer to 10% of revenues.

❄ ❄ ❄ ❄ ❄

Most folks I talk to about agentic programming are using models in the cloud: Claude, Codex, and the like. Everyone agrees these are the most powerful models, the ones that triggered the November Inflection. But do we need to use the Most Powerful Models, particularly when we have to ship data to them, and pay handsomely for the privilege? Willem van den Ende considers an alternative, that local models are Good Enough.

Assumptions

- We are all figuring this out.

- Quality of a harness (coding agent + “skills” + extensions) can matter as least as much as the model

- Running open models and an open coding agent + custom extensions takes time, but pays off in understanding and a stable base where engineering effort compounds

- Open, local, models have (for me) crossed the point where they are good enough for daily work with a coding agent.

The post describes in detail his setup for local model work. It includes sandboxing with Nono, which is something to consider even if using a Cloud model - such powerful tools need a Zero Trust Architecture.

❄ ❄ ❄ ❄ ❄

In case you haven’t noticed, those last two fragments resonate. Apple isn’t playing the cloud AI model game, they are saving a huge amount of money, and if local models end up being the future, they’ll be looking rather wise. Van den Ende’s post led me to a podcast by Nate B Jones that argues that Apple is replaying a fifty-year-old strategy here. All those years ago anyone who used a computer bought time on a mainframe; the Apple II put far less capable compute into the home and small office. From there came spreadsheets, desktop publishing, and the modern home computer - things that weren’t possible using mainframes.

He sees the rise of John Ternus as CEO as not merely a switch to a known insider successor - but a bet that the future of AI is sophisticated hardware in the home, office, and pocket. If Open Source models are Good Enough, then why spend money sending tokens - containing your sensitive data - to the AI megacorps?

❄ ❄ ❄ ❄ ❄

Talking of five decades in the past, it was in 1975 that Fred Brooks opened one of the most influential books in our profession with these paragraphs:

No scene from prehistory is quite so vivid as that of the mortal struggles of great beasts in the tar pits. In the mind’s eye one sees dinosaurs, mammoths, and sabertoothed tigers struggling against the grip of the tar. The fiercer the struggle, the more entangling the tar, and no beast is so strong or so skillful but that he ultimately sinks.

Large-system programming has over the past decade been such a tar pit, and many great and powerful beasts have thrashed violently in it. Most have emerged with running systems — few have met goals, schedules, and budgets. Large and small, massive or wiry, team after team has become entangled in the tar. No one thing seems to cause the difficulty — any particular paw can be pulled away. But the accumulation of simultaneous and interacting factors brings slower and slower motion. Everyone seems to have been surprised by the stickiness of the problem, and it is hard to discern the nature of it. But we must try to understand it if we are to solve it.

With the title of his recent post Kent Beck summons up that imagery as the Genie Tarpit. After explaining why skilled software development is about building both features and futures, he observes that these AI tools aren’t doing a good job of producing software with the kind of internal quality that is needed for a good future.

Here’s what I’ve observed — genies naturally live down & to the left of muddling. The “plausible deniability” task orientation of the genie leaves it claiming success even though the code doesn’t work at all. And complexity piles on complexity until even the genie can’t pretend to make progress any more.

It’s still an open question whether, or to what extent, internal quality matters in the age of agentic programming. One view is, as Laura Tacho puts it, “The Venn Diagram of Developer Experience and Agent Experience is a circle”. Well organized elements, with good naming, help The Genie understand code, so are important if we can continue to go beyond small disposable systems. The other view is that such internal quality doesn’t matter, that the galaxy brain of LLMs will make sense of the biggest bowls of spaghetti. Maybe not now, but after a couple more inflections.

That’s the fundamental question. Can The Genie evade the tar pit, or will it struggle fruitlessly against the tar’s sticky grip?

Tue 05 May 2026 12:18

Martin Fowler

In the early 1960s, Fred Brooks managed the development of IBM's System/360 computer systems. After it was done he penned his thoughts in the book The Mythical Man-Month which became one of the most influential books on software development after its publication in 1975. Reading it in 2026, we'll find some of it outdated, but it also retains many lessons that are still relevant today.

The book contains Brooks's law: “Adding manpower to a late software project makes it later.” The issue here is communication: as the number of people grows, the number of communication paths between those people grows quadratically. Unless these paths are skillfully designed, work quickly falls apart.
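One way to put numbers on this: if every pair of people may need to talk, a team of n has n(n-1)/2 possible paths. A small sketch follows; the function name is mine and does not come from the book.

```python
def communication_paths(people: int) -> int:
    """Number of distinct pairs among `people` team members: n(n-1)/2."""
    if people < 0:
        raise ValueError("people must be non-negative")
    return people * (people - 1) // 2


for size in (3, 5, 10, 20):
    print(size, communication_paths(size))
# 3 3
# 5 10
# 10 45
# 20 190
```

Doubling a team from 10 to 20 roughly quadruples the paths, from 45 to 190.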

Perhaps my most enduring lesson from this book is the importance of conceptual integrity:

I will contend that conceptual integrity is the most important consideration in system design. It is better to have a system omit certain anomalous features and improvements, but to reflect one set of design ideas, than to have one that contains many good but independent and uncoordinated ideas.

He argues that conceptual integrity comes from both simplicity and straightforwardness - the latter being how easily we can compose elements. This point of view has been a strong influence upon my career, the pursuit of conceptual integrity underpins much of my work.

The anniversary edition of this book is the one to get, because it also includes his even-more influential 1986 essay “No Silver Bullet”.

Wed 29 Apr 2026 09:23

Martin Fowler

Chris Parsons has updated his guide on using AI to code. This is his third update; what I like about it is that he gives a lot of concrete information about how he uses AI, with sufficient detail that we can learn from him. His advice also resonates with the better advice I’ve seen out there, so the article makes a good overview of the state of using AI for software development.

I wrote the previous version of this post in March 2025, updated it once in August, and it has been linked from almost everything I have written about AI engineering since. The fundamentals from that post still hold: keep changes small, build guardrails, document ruthlessly, and make sure every change gets verified before it ships. One thing has had to move with the volume. “Verified” used to mean “read by you”. With modern agent throughput, it has to mean “checked by tests, by type checkers, by automated gates, or by you where your judgement matters”. The check still happens; it just does not always happen in your head.

Like Simon Willison, he makes a clear distinction between vibe coding, where you don’t look at or care about the code, and agentic engineering. He recommends either Claude Code or Codex CLI. He considers the inner harness provided by his preferred tools to be a key part of their advantage.

He sees verification as the key thing to focus on:

A team that can generate five approaches and verify all five in an afternoon will outpace a team that generates one and waits a week for feedback. The game is not “how fast can we build” any more. It is “how fast can we tell whether this is right”. That shifts where to invest. Build better review surfaces, not better prompts. Make feedback unnecessary where you can by having the agent verify against a realistic environment before it asks a human, and make feedback instant where you cannot.

The key role of the programmer is in training the AI to write software properly, and the most important thing skilled agentic programmers can do is pass that skill onto other developers.

And if you are a senior engineer worried that your job is quietly turning into approving diffs: it is. The way out is to train the AI so the diffs are right the first time, to make yourself the person on the team who shapes the harness, and to make that work the visible thing you are measured on. That role compounds in a way that reviewing never will.

❄ ❄ ❄ ❄ ❄

Early this month Birgitta Böckeler wrote a superb article on Harness Engineering. (That’s not just my opinion, judging by the crazy traffic it’s attracted.) Birgitta has now recorded a video discussion with Chris Ford on Harness Engineering, which is well worth a watch.

In it they focus on discussing the role of computational sensors in the harness, such as static analysis and tests.

LLMs are great for exploratory and fuzzy rules, but once you have something that really is objective, converting it to a formal, unambiguous, deterministic format can give you more assurance

Birgitta did some experiments to explore the benefits of adding sensors, including a deep dive on using static analysis. She found it’s more useful as agents can really address every warning, and don’t slack off like humans do.

❄ ❄ ❄ ❄ ❄

Adam Tornhill considers an age-old question: how long should a function be? This question is still relevant in the age of agentic programming.

AI models do not “understand” code the way humans do. They infer meaning from patterns in tokens and depend heavily on what is explicitly expressed in the code.

Research shows that naming plays a critical role. When meaningful identifiers are replaced with arbitrary names, model performance drops significantly. Current models rely heavily on literal features—names, structure, and local context—rather than inferred semantics.

Like me, he doesn’t think the answer is to think about how many lines should be in a function, instead it’s all about providing better structure. He has a good example of how a well-chosen function defines useful concepts, where a function wraps four lines of code, returning a new concept that enters the vocabulary of the program.

Functions are the first unit of structure in a codebase. They define how logic is grouped, how intent is communicated, and how change is localized. If the function boundaries are wrong, everything built on top of them becomes harder to understand and harder to evolve.

This fits with my writing that the key to function length is the separation between intention and implementation:

If you have to spend effort into looking at a fragment of code to figure out what it’s doing, then you should extract it into a function and name the function after that “what”. That way when you read it again, the purpose of the function leaps right out at you, and most of the time you won’t need to care about how the function fulfills its purpose - which is the body of the function.
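A small illustration of that separation, with invented names and numbers: the date comparison below takes a moment to decode when written inline, so it gets a function named after what it decides.

```python
from datetime import date


def is_summer(day: date, summer_start: date, summer_end: date) -> bool:
    """The 'what': does this day fall inside the summer season?"""
    return summer_start <= day <= summer_end


def charge(quantity: int, day: date, summer_start: date, summer_end: date,
           summer_rate: float, regular_rate: float,
           regular_service_fee: float) -> float:
    """Price an order; summer orders use a different rate and carry no fee."""
    # Reads as intention; the date comparison lives behind the name.
    if is_summer(day, summer_start, summer_end):
        return quantity * summer_rate
    return quantity * regular_rate + regular_service_fee
```

`is_summer` is only one line long, yet it adds a word to the program's vocabulary that both a human reader and a model can pick up from the name alone.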

❄ ❄ ❄ ❄ ❄

Many folks in my feeds recommended Nilay Patel’s post on Why People Hate AI. He thinks that many people in the software world have “software brain”:

The simplest definition I’ve come up with is that it’s when you see the whole world as a series of databases that can be controlled with the structured language of software code. Like I said, this is a powerful way of seeing things. So much of our lives run through databases, and a bunch of important companies have been built around maintaining those databases and providing access to them.

Zillow is a database of houses. Uber is a database of cars and riders. YouTube is a database of videos. The Verge’s website is a database of stories. You can go on and on and on. Once you start seeing the world as a bunch of databases, it’s a small jump to feeling like you can control everything if you can just control the data.

Software Brain views people as entries in databases, and oddly enough, a lot of people don’t like that. Which is why so many polls reveal the negative feelings folks have about the AI movement.

Even taking the time to consider how much of your life is captured in databases makes people unhappy. No one wants to be surveilled constantly, and especially not in a way that makes tech companies even more powerful. But getting everything in a database so software can see it is a preoccupation of the AI industry. It’s why all the meeting systems have AI note takers in them now.

Patel draws a similarity that I’ve often made - that between programmers and lawyers. Lawyers who draw up contracts are creating a protocol for how the parties in the contract should behave. As Patel puts it:

If the heart of software brain is the idea that thinking in the structured language of code can make things happen in the real world, well, the heart of lawyer brain is that thinking in the structured legal language of statutes and citations can also make things happen. Hell, it can give you power over society.

The difference, of course, is that law is non-deterministic. Litigation is resolving what happens when people have different ideas about how those contracts should execute.

❄ ❄ ❄ ❄ ❄

I was chatting recently with a company who wanted to use AI to make sense of their internal data. The potential was great, but the problem was that the data was a mess. People put stuff into fields that didn’t make sense, and there was little consistency about how people classified important entities. As someone commented:

the hardest problem with internal data is precise, consistent definitions

You can imagine my astonishment. (i.e. none at all - this has been a constant theme during all my decades with computers.) The difficulty of getting such definitions undermines many of the hopes of Software Brain.

This resonates with our relationship with LLMs when programming. Precise and consistent definitions strike me as crucial to effective communication with The Genie. These definitions need to grow in the conversation, and be tended over time. Conceptual modeling will be a key skill for agentic programming and whatever comes next. (At least I hope it will, since it’s a part of programming I really enjoy.)

❄ ❄ ❄ ❄ ❄

Patel’s article refers to Ezra Klein’s post about the new feeling in San Francisco.

You might think that A.I. types in Silicon Valley, flush with cash, are on top of the world right now. I found them notably insecure. They think the A.I. age has arrived and its winners and losers will be determined, in part, by speed of adoption. The argument is simple enough: The advantages of working atop an army of A.I. assistants and coders will compound over time, and to begin that process now is to launch yourself far ahead of your competition later. And so they are racing one another to fully integrate A.I. into their lives and into their companies. But that doesn’t just mean using A.I. It means making themselves legible to the A.I.

That legibility is the heart of Patel’s observation. That’s why I see many colleagues of mine dumping all their email, meeting notes, slide decks and everything else into files that AI can read and work with. This works to the strengths of AI: we know that AI is really good at querying unstructured information. So I can figure out what’s buried in my notes in a way that’s far more effective than hoping I’m typing the right search regex.

I’ve been using Gemini a fair bit for exactly this on the web, finding it easier to write a question to it than to throw search terms at Google. Gemini keeps a record of my past requests, and uses that to help it tune what I’m looking for. As Klein observes:

[The AI] is constantly referring back to other things it knows, or thinks it knows, about me. Sycophancy, in my experience, has given way to an occasionally unsettling attentiveness; a constant drawing of connections between my current concerns and my past queries, like a therapist desperate to prove he’s been paying close attention.

The result is a strange amalgam of feeling seen and feeling caricatured.

Like myself, Klein is a writer, and is faced by the same temptation that I have when I think about AI and writing. Maybe instead of toiling over articles, I should ask an LLM to create an AGENTS.md file that summarizes my writing style, and every few days ask it to compose an article on some subject, read it, tweak it, and then publish my erudite musings. But that’s not at all appealing to me. I want understanding to grow in my brain, not the LLM’s transient session. Writing to explain my thinking to others is how I refine that thinking, “chiseling that idea into something publishable” as Klein puts it. To have an AI write for me is to cripple my own mind.

Tue 28 Apr 2026 09:02

Martin Fowler

LLM programming assistants have demonstrated considerable value, but mostly with individual developers. The internal IT organization in Thoughtworks has been using them for their teams and has developed a method and workflow called Structured Prompt-Driven Development (SPDD). Wei Zhang and Jessie Jie Xia describe a simple example of this workflow with details in GitHub. This workflow treats the prompts as a first-class artifact, kept with the code in version control, and used to align development with business needs. They have found that developers need three key skills to be effective: alignment, abstraction-first, and iterative review.

more…

Tue 21 Apr 2026 16:34 CEST

Martin Fowler

Last week Thoughtworks released the 34th volume of our Technology Radar. This radar is our biannual survey of our experience of the technology scene, highlighting tools, techniques, platforms, and languages that we’ve used or that have otherwise caught our eye. This edition contains 118 blips, each briefly describing our impressions of one of these elements.

As we would expect, the radar is dominated by AI-oriented topics. Part of this is revisiting familiar ground with LLM-assisted eyes:

An interesting consequence of AI in software development is that it’s not only forcing us to look to the future; it’s also pushing us to revisit the foundations of our craft. While assembling this edition, we found ourselves returning to many established techniques, from pair programming to zero trust architecture, and from mutation testing to DORA metrics. We also revisited core principles of software craftsmanship, such as clean code, deliberate design, testability and accessibility as a first-class concern. This is not nostalgia, but a necessary counterweight to the speed at which AI tools can generate complexity. We also observed a resurgence of the command line: After years of abstracting it away in the name of usability, agentic tools are bringing developers back to the terminal as a primary interface.

I was especially happy to see my colleague Jim Gumbley added to the writing team. He’s been a regular source of security information for me over the years, including working on this site’s Threat Modeling Guide. Having a strong security presence on the radar team is especially important given the serious security concerns around using LLMs. One of the themes of the radar is securing “permission hungry” agents:

“Permission hungry” describes the bind at the heart of the current agent moment: the agents worth building are the ones that need access to everything. OpenClaw and Claude Cowork supervise real work tasks; Gas Town coordinates agent swarms across entire codebases. These agents require broad access to private data, external communication and real systems — each arguing that the payoff justifies it.

However, like a skier who’s just learned to turn and confidently points themselves at the hardest black run, the safeguards haven’t caught up with that ambition. The appetite for access collides with unsolved problems. Prompt injection means models still can’t reliably distinguish trusted instructions from untrusted input.

Given all of this, many of this radar’s blips are about Harness Engineering. Indeed, the radar meeting was a major source of ideas for Birgitta’s excellent article on the subject. The radar includes several blips suggesting the guides and sensors necessary for a well-fitting harness. I expect that when the next radar appears in six months’ time, that list will increase.

❄ ❄ ❄ ❄ ❄

Mike Mason looks at what happens when developers aren’t reading the code.

The Python codebase Claude produced was largely working. Unit tests passed, and a few hours of real-world testing showed it was successfully managing a fairly complex piece of my infrastructure. But somewhere around 100KB of total code I noticed something: the main file had grown to about 50KB (2,000 lines) and Claude Code, when it needed to make edits, had started reaching for sed to find and modify code within that file. When I saw that, it was a serious alarm bell.

As well as the experience of “a friend”, he ponders the 500,000 lines of Claude Code after the leak.

Both things are true: there is good architecture in Claude Code, and there is also an incomprehensible mess. That’s actually the point. You don’t get to know which is which without reading the code.

His conclusion is a rough framework. Throw-away analysis scripts are fine to vibe away. Tooling you need to maintain, and durable code, need regular human review - even if it’s just a human asking a model to evaluate the code with some hints as to what good code looks like.

The moment you say “I’m getting uncomfortable with how big this is getting, can we do something better?” it does the right thing: sensible decomposition, new classes, sometimes even unit tests for the new thing. It knew, it just didn’t volunteer it.

He does recommend being serious with CLAUDE.md. I don’t know if he’s tried many of the patterns that Rahul Garg has recently posted to break the similar frustration loop that he saw.
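One cheap way to get a prompt for that kind of review is a check that flags files once they grow past a size where nobody is likely to be reading them. What follows is only a sketch under stated assumptions: the function name `oversized_files` and the 500-line default are arbitrary choices made here for illustration, not anything Mason describes.

```python
from pathlib import Path


def oversized_files(root, max_lines=500, pattern="*.py"):
    """Return (path, line_count) for files under root longer than max_lines.

    The 500-line default is an arbitrary, hypothetical threshold.
    """
    flagged = []
    for path in sorted(Path(root).rglob(pattern)):
        if not path.is_file():
            continue
        # Count lines without loading the whole file into memory.
        with path.open(encoding="utf-8", errors="replace") as handle:
            count = sum(1 for _ in handle)
        if count > max_lines:
            flagged.append((path, count))
    return flagged
```

A check like this doesn't judge the design. It only signals that it's time for a human to look, or to ask the model whether something better can be done.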

❄ ❄ ❄ ❄ ❄

Dan Davies poses an annoying philosophy thought experiment for us to consider how we feel about LLMs indulging in ghost writing.

❄ ❄ ❄ ❄ ❄

DOGE dismantled many useful things during their brief period with the wood chipper. One of these was Direct File, a government program that supported people filing their taxes online. Don Moynihan has talked to many folks involved in Direct File, and has penned a worthwhile essay that isn’t just relevant to Direct File and other U.S. government technology projects, but indeed to any technology initiative in a large organization.

Moynihan highlights:

a paradox of government reform: the simpler a potential change appears, the more likely that it has not been implemented because it features deceptive complexity that others have tried and failed to resolve.

I’ve heard that tale in many a large corporation too.

One way government initiatives are different is that, at their best, they’re built on an attitude of public service.

Many who worked on Direct File drew a sharp contrast with DOGE and their approach to building tech products. One point of distinction was DOGE’s seeming disinterest in public interest goals and of the public itself: “if you do not think government has a responsibility to serve people, I think it draws into question how good are you going to be at making government work better for people if you just don’t believe in that underlying principle”

The tragedy for U.S. taxpayers like me is that we’ve lost an effective way to go through the annual hassle of taxes. In addition, the IRS is much weaker - it’s lost 25% of its staff and its budget is 40% below what it was in 2010. Much though we hate tax collectors, this isn’t a good thing. An efficient tax system is an important part of national security. Many historians consider that the ability to raise taxes effectively was an important reason why Britain won its century-long struggle with France in the Eighteenth century. A wonky tax system is also a major reason why the French monarchy, so powerful at the start of that century, fell to revolution. Indeed there is considerable evidence that increasing the budget of the IRS would more than pay for itself by increasing revenue.

Tue 14 Apr 2026 09:16

Martin Fowler

I attended the first Pragmatic Summit early this year, and while there host Gergely Orosz interviewed Kent Beck and myself on stage. The video runs for about half-an-hour. I always enjoy nattering with Kent like this, and Gergely pushed into some worthwhile topics. Given the timing, AI dominated the conversation - we compared it to earlier technology shifts, the experience of agile methods, the role of TDD, the danger of unhealthy performance metrics, and how to thrive in an AI-native industry.

❄ ❄ ❄ ❄ ❄

Perl is a language I used a little, but never loved. However the definitive book on it, by its designer Larry Wall, contains a wonderful gem. The three virtues of a programmer: hubris, impatience - and above all - laziness.

Bryan Cantrill also loves this virtue:

Of these virtues, I have always found laziness to be the most profound: packed within its tongue-in-cheek self-deprecation is a commentary on not just the need for abstraction, but the aesthetics of it. Laziness drives us to make the system as simple as possible (but no simpler!) — to develop the powerful abstractions that then allow us to do much more, much more easily.

Of course, the implicit wink here is that it takes a lot of work to be lazy

Understanding how to think about a problem domain by building abstractions (models) is my favorite part of programming. I love it because I think it’s what gives me a deeper understanding of a problem domain, and because once I find a good set of abstractions, I get a buzz from the way they make difficulties melt away, allowing me to achieve much more functionality with fewer lines of code.

Cantrill worries that AI is so good at writing code, we risk losing that virtue, something that’s reinforced by brogrammers bragging about how they produce thirty-seven thousand lines of code a day.

The problem is that LLMs inherently lack the virtue of laziness. Work costs nothing to an LLM. LLMs do not feel a need to optimize for their own (or anyone’s) future time, and will happily dump more and more onto a layercake of garbage. Left unchecked, LLMs will make systems larger, not better — appealing to perverse vanity metrics, perhaps, but at the cost of everything that matters. As such, LLMs highlight how essential our human laziness is: our finite time forces us to develop crisp abstractions in part because we don’t want to waste our (human!) time on the consequences of clunky ones. The best engineering is always borne of constraints, and the constraint of our time places limits on the cognitive load of the system that we’re willing to accept. This is what drives us to make the system simpler, despite its essential complexity.

This reflection particularly struck me this Sunday evening. I’d spent a bit of time modifying how my music playlist generator worked. I needed a new capability, spent some time adding it, got frustrated at how long it was taking, and wondered about maybe throwing a coding agent at it. More thought led to realizing that I was doing it in a more complicated way than it needed to be. I was including a facility that I didn’t need, and by applying yagni, I could make the whole thing much easier, doing the task in just a couple of dozen lines of code.

If I had used an LLM for this, it may well have done the task much more quickly, but would it have made a similar over-complication? If so, would I just shrug and say LGTM? Would that complication cause me (or the LLM) problems in the future?

❄ ❄ ❄ ❄ ❄

Jessica Kerr (Jessitron) has a simple example of applying the principle of Test-Driven Development to prompting agents. She wants all updates to include updating the documentation.